Dependencies for Helm releases in FluxCD

An example of a dependency between a backend service and a Redis service and how to set up and configure FluxCD to manage dependencies between Helm releases

FluxCD is a popular, CNCF-graduated GitOps tool that automates the deployment and management of your Kubernetes workloads.

In this post, we will look at an example dependency between a backend service and a Redis service, and at how to set up and configure FluxCD to manage dependencies between Helm releases.

Prerequisites

- Kubernetes Cluster

- Helm v3

HelmRelease dependencies

Let’s consider an example scenario where the apps have the following dependency: backend service → Redis service.

If the backend service starts before the Redis pod is ready, it cannot read the requested data from Redis and starts throwing errors like this:

```
"msg":"cache server is offline","error":"dial tcp redis:6379: connect: connection refused"
```

Here, we’ll use podinfo as our backend service and Redis as the cache service.

You can define a dependency between Helm releases with the following field in the HelmRelease manifest:

```yaml
dependsOn:
  - name: <release name>
```

Steps

Configure Flux

- Install the Flux CLI with the following command:

```bash
curl -s https://fluxcd.io/install.sh | sudo bash
```

- Install the Flux controllers that will manage Helm releases in the cluster:

```bash
flux install \
  --namespace=flux-system \
  --components=source-controller,helm-controller
```

Configure apps

- Add the Bitnami Helm repository, which the Redis service needs, to the cluster via Flux:

```bash
flux create source helm bitnami \
  --namespace=default \
  --url=https://charts.bitnami.com/bitnami \
  --interval=15m
```

- Add the backend service’s Helm repository to the cluster via Flux:

```bash
flux create source helm backend \
  --namespace=default \
  --url=https://stefanprodan.github.io/podinfo \
  --interval=15m
```

Each command creates a HelmRepository resource, and Flux fetches the latest chart index from that repository every 15 minutes.
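Because Flux is a GitOps tool, you may prefer to keep these sources in Git next to the HelmRelease manifests instead of creating them imperatively. Below is a minimal sketch of equivalent manifests, written in the same heredoc style used later in this post. The file name sources.yaml is arbitrary, and the source.toolkit.fluxcd.io/v1beta2 API version is an assumption that matches Flux releases serving helm.toolkit.fluxcd.io/v2beta1 (newer Flux releases serve v1). Adding --export to the flux create source helm commands above should print comparable YAML instead of applying it.

```bash
# Declarative equivalent of the two `flux create source helm` commands above.
# Assumption: source.toolkit.fluxcd.io/v1beta2 is served by your Flux version.
cat <<EOF > sources.yaml
apiVersion: source.toolkit.fluxcd.io/v1beta2
kind: HelmRepository
metadata:
  name: bitnami
  namespace: default
spec:
  interval: 15m
  url: https://charts.bitnami.com/bitnami
---
apiVersion: source.toolkit.fluxcd.io/v1beta2
kind: HelmRepository
metadata:
  name: backend
  namespace: default
spec:
  interval: 15m
  url: https://stefanprodan.github.io/podinfo
EOF
```

You can then apply the file with kubectl apply -f sources.yaml, or commit it to the repository that Flux reconciles.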

You can check that both sources are ready:

```
$ kubectl get helmrepository
NAME      URL                                      AGE   READY   STATUS
backend   https://stefanprodan.github.io/podinfo   2m    True    stored artifact for revision 'fd69a2'
bitnami   https://charts.bitnami.com/bitnami       2m    True    stored artifact for revision '423580'
```

- Let’s create the HelmRelease YAML for the backend service:

```bash
cat <<EOF > backend-release.yaml
apiVersion: helm.toolkit.fluxcd.io/v2beta1
kind: HelmRelease
metadata:
  name: backend
  namespace: default
spec:
  interval: 5m
  chart:
    spec:
      chart: podinfo
      version: 6.2.2
      sourceRef:
        kind: HelmRepository
        name: backend
  dependsOn:
    - name: redis
  values:
    cache: "tcp://redis-master:6379"
EOF
```

- Now, create the HelmRelease YAML for the Redis service:

```bash
cat <<EOF > redis-release.yaml
apiVersion: helm.toolkit.fluxcd.io/v2beta1
kind: HelmRelease
metadata:
  name: redis
  namespace: default
spec:
  interval: 5m
  chart:
    spec:
      chart: redis
      version: 17.3.14
      sourceRef:
        kind: HelmRepository
        name: bitnami
  values:
    architecture: standalone
    auth:
      enabled: false
EOF
```

- In the manifests above, we install the backend service and Redis Helm charts from the Helm repositories we created earlier with Flux `Source` definitions.

- We set the sync/reconcile interval to `5m`.

- We also applied a few custom Helm values: the Redis service’s address for the backend service, and a standalone Redis master instead of the default master-replica architecture.

- Finally, we declared with `dependsOn` that the backend service depends on Redis, which means the backend release is reconciled only once the Redis release is Ready.

- Apply both HelmRelease manifest files:

```bash
kubectl apply -f redis-release.yaml -f backend-release.yaml
```

Let’s check the status of the releases:

```
$ flux get helmreleases -n default
NAME      REVISION   SUSPENDED   READY   MESSAGE
backend   6.2.2      False       True    Release reconciliation succeeded
redis     17.3.14    False       True    Release reconciliation succeeded
```

We can also see from the helm-controller pod’s logs that the backend service was deployed only after Redis became ready:

```
"level":"info","msg":"all dependencies are ready, proceeding with release","HelmRelease":"name":"backend","namespace":"default"
```

Note: By default, Flux follows Helm’s `--wait` behavior: Helm waits until all pods of the deployment are in a ready state before marking the release as successful. Read more in the Helm documentation.
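`flux get helmreleases` shows a snapshot; in a CI job you may instead want to block until the backend release is ready, and fail with a useful message if it is not. Below is a minimal sketch, assuming kubectl access to the cluster with the Flux CRDs installed. `wait_for_release` is a hypothetical helper name and the 5m default timeout is arbitrary. Because Flux only reconciles backend after redis is Ready, waiting on backend alone also covers its dependency.

```bash
# Hypothetical helper: block until a HelmRelease reports the Ready condition.
# Arguments: namespace, release name, optional timeout (defaults to 5m).
wait_for_release() {
  local ns="$1" name="$2" timeout="${3:-5m}"
  if ! kubectl wait --for=condition=Ready "helmrelease/${name}" \
      --namespace "$ns" --timeout="$timeout"; then
    # On failure, print the Ready condition's message to stderr,
    # e.g. "dependency 'default/redis' is not ready".
    kubectl get helmrelease "$name" --namespace "$ns" \
      -o jsonpath='{.status.conditions[?(@.type=="Ready")].message}{"\n"}' >&2
    return 1
  fi
}
```

For example, wait_for_release default backend 10m waits up to ten minutes for the backend release and prints the blocking reason if it times out.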

Release failure

If a release fails, for example if the Redis release fails and its pod never becomes ready, the backend service will not be deployed.

To test this, uninstall both Helm releases with the following command:

```bash
kubectl delete -f redis-release.yaml -f backend-release.yaml
```

Update the Redis manifest to use an image tag that does not exist, so that the pod can never start (it will be stuck in ImagePullBackOff):

```yaml
values:
  architecture: standalone
  auth:
    enabled: false
  image:
    tag: "1.0"
```

Apply both Helm releases again and check their status:

```
$ kubectl get helmreleases
NAME      AGE   READY   STATUS
backend   6m    False   dependency 'default/redis' is not ready
redis     6m    False   install retries exhausted
```

Cross-namespace and multi-release dependencies

You can also define multiple Helm releases name in the dependency block, as the dependsOn property is an array of Helm release names that the current Helm release depends on.

For example :

dependsOn:

- name: redis

- name: mysqlTo reference the Helm release in different namespace, use the following syntax : <namespace>/<name>, for example :

dependsOn:

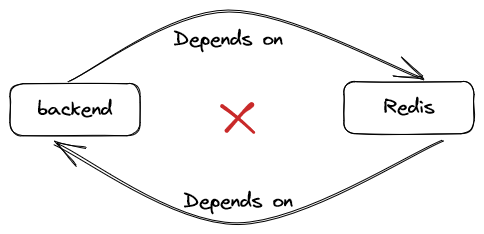

- name: monitoring/prometheusAvoid dependency death loop

Circular dependencies between HelmRelease resources must be avoided; otherwise, the interdependent releases will never be reconciled.

To understand this, I’ll add a dependency on the backend service to the Redis release’s YAML, so that each release now depends on the other.
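Here is a sketch of the updated Redis manifest. It assumes the loop is created by adding backend to the Redis release’s `dependsOn`, which matches the status messages below, while backend-release.yaml keeps its existing dependency on redis. Everything else is unchanged from the Redis manifest created earlier.

```bash
# Redis now depends on backend, while backend-release.yaml still depends on redis,
# so neither release can ever become Ready first.
cat <<EOF > redis-release.yaml
apiVersion: helm.toolkit.fluxcd.io/v2beta1
kind: HelmRelease
metadata:
  name: redis
  namespace: default
spec:
  interval: 5m
  chart:
    spec:
      chart: redis
      version: 17.3.14
      sourceRef:
        kind: HelmRepository
        name: bitnami
  dependsOn:
    - name: backend
  values:
    architecture: standalone
    auth:
      enabled: false
EOF
```

Apply both files again with kubectl apply -f redis-release.yaml -f backend-release.yaml.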

Once the manifests are applied, let’s check the status of the Helm releases:

```
$ flux get helmreleases -n default
NAME      REVISION   SUSPENDED   READY   MESSAGE
backend              False       False   dependency 'default/redis' is not ready
redis                False       False   dependency 'default/backend' is not ready
```

Let’s check the Kubernetes events to see why the releases are not ready. Alternatively, you can describe the HelmRelease with kubectl describe helmrelease.

```
info HelmChart 'default/default-redis' is not ready
info dependencies do not meet ready condition (dependency 'default/backend' is not ready)
info HelmChart 'default/default-backend' is not ready
info dependencies do not meet ready condition (dependency 'default/redis' is not ready)
```

As you can see, this dependency loop will never be resolved, because each release is waiting for the other.
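Because both manifests are accepted and the releases simply stay not ready, it can help to check for loops before the manifests reach the cluster, for example in CI. Below is a minimal sketch using the standard tsort utility, a topological sort that reports loops on stderr. The edge format and the function names `release_order` and `has_dependency_cycle` are assumptions for illustration. Extracting the edges from the `dependsOn` fields of your manifests is left out.

```bash
# Input: one edge per line, "<dependency> <dependent>",
# e.g. "default/redis default/backend" means redis must be Ready before backend.

# release_order prints the releases in an order that satisfies every edge,
# with dependencies first.
release_order() {
  tsort
}

# has_dependency_cycle succeeds (returns 0) if the edges contain a loop.
# Only tsort's stderr is captured, so this does not rely on tsort's exit status.
has_dependency_cycle() {
  local errors
  errors=$(tsort 2>&1 >/dev/null)
  [ -n "$errors" ]
}

# Usage: the loop from this section is reported.
printf '%s\n' 'default/redis default/backend' 'default/backend default/redis' |
  has_dependency_cycle && echo "dependency loop detected"
```

With only the original edge (default/redis default/backend), release_order prints default/redis before default/backend, and has_dependency_cycle fails.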


Aviator: Automate your cumbersome merge processes

Aviator automates tedious developer workflows by managing git Pull Requests (PRs) and continuous integration test (CI) runs to help your team avoid broken builds, streamline cumbersome merge processes, manage cross-PR dependencies, and handle flaky tests while maintaining their security compliance.

There are 4 key components to Aviator:

- MergeQueue – an automated queue that manages the merging workflow for your GitHub repository to help protect important branches from broken builds. The Aviator bot uses GitHub Labels to identify Pull Requests (PRs) that are ready to be merged, validates CI checks, processes semantic conflicts, and merges the PRs automatically.

- ChangeSets – workflows to synchronize validating and merging multiple PRs within the same repository or multiple repositories. Useful when your team often sees groups of related PRs that need to be merged together, or otherwise treated as a single broader unit of change.

- FlakyBot – a tool to automatically detect, take action on, and process results from flaky tests in your CI infrastructure.

- Stacked PRs CLI – a command line tool that helps developers manage cross-PR dependencies. This tool also automates syncing and merging of stacked PRs. Useful when your team wants to promote a culture of smaller, incremental PRs instead of large changes, or when your workflows involve keeping multiple, dependent PRs in sync.