Getting Started with Terraform in DevOps

Software organizations that have started to adopt a DevOps culture should strongly consider implementing Terraform for managing their application infrastructure. Here's how and why.

DevOps is a philosophy of continuous improvement that seeks to increase the velocity and quality of software releases. It has become an essential part of modern software engineering, and you’re likely to find aspects of DevOps culture and process throughout the Software Development Lifecycle (SDLC).

People and processes are important, but having the right tools is also critical; infrastructure-as-code solutions like Terraform are useful tools for achieving a software organization’s DevOps goals.

In this article, we’ll look at how Terraform can be implemented and utilized as a key part of the overall DevOps culture. Before jumping into the technical bits, it will be helpful to establish a solid understanding of the philosophies and goals that underpin DevOps.

DevOps: Philosophy and goals

Continuous improvement

“Continuous improvement” is something that comes up again and again when discussing DevOps. Small, consistent improvements over time add up to big outcomes. Consider the inverse: trying to make broad, complex changes all at once often leads to failures and incomplete implementations.

Agile development is another excellent example of this pattern; take a look at any sprint board, and you will see discrete, well-scoped chunks of work that add up to a larger story. Trying to encapsulate all that change in a single effort isn’t going to be successful.

Collaboration

Another key element of the DevOps philosophy is collaboration. It’s right there in the name: DevOps is a portmanteau of “development” and “operations,” two teams that historically operated in very distinct silos within software organizations. Legacy software environments could get by with developers “kicking releases over the wall” and leaving the operations and admin teams to figure out how to make them work.

That simply won’t work in the modern software ecosystem. Most software applications live on the web, have 24/7 uptime, and see a constant stream of users. Releases potentially happen multiple times a day, so it only makes sense that developers and operations need to be in lock-step with a shared set of goals and practices. DevOps allows these teams to leverage automation, process, and culture to get great returns on their inputs without the massive overhead of legacy development practices.

When it comes to leveraging automation, that’s where the tools come in. Infrastructure as code tools have gone through several evolutions, from CFEngine to configuration management tools like Ansible, Puppet, and Chef. Terraform was released in 2014 and has had a significant impact on how infrastructure is managed at scale. Using Terraform for DevOps is a natural fit, because the tool aligns closely with DevOps philosophies.

Infrastructure as code with Terraform

Infrastructure as Code (IaC) is a method of automating the process of provisioning and managing software infrastructure. Being able to define the desired configuration and scale of your infrastructure is a powerful abstraction that unlocks the ability to manage infrastructure like traditional software.

In particular, Terraform has some advantages that make it well suited to a DevOps initiative: broad adoption across the industry, a strong support and community base with lots of tooling, and a rich knowledge ecosystem.

Declarative syntax is another key feature. In traditional imperative programming, developers must manually define the logic and ordering of program flow. The code will generally execute in the exact manner and order as defined by the programmer. While this generally works well for traditional programs, defining infrastructure is a bit of a different beast.

Infrastructure as code tools are typically used to deploy infrastructure in cloud platforms like AWS, GCP, and Azure. These platforms provide APIs across multiple services that can be utilized to make informational requests, as well as to provision actual infrastructure. In larger, more complex infrastructure deployments, multiple calls to multiple APIs are likely needed, and there may be a specific execution order that is required to ensure functional systems.

In AWS, for example, provisioning an inline policy for an IAM role requires the creation of the IAM role first. At any significant scale, it would quickly become unmanageable to configure this ordering manually. With Terraform’s declarative syntax, however, users simply “declare” the desired infrastructure configuration, and Terraform works out the dependencies between resources and creates (and destroys) infrastructure in the correct order.
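The IAM example above can be sketched roughly as follows. This is a minimal illustration, not a production policy: it assumes the AWS provider is already configured, and the resource labels, names, and policy contents (`app`, `app-role`, `app-read-logs`, the EC2 trust relationship) are hypothetical.

```hcl
# Hypothetical role; the name and trust policy are illustrative only.
resource "aws_iam_role" "app" {
  name = "app-role"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect    = "Allow"
      Action    = "sts:AssumeRole"
      Principal = { Service = "ec2.amazonaws.com" }
    }]
  })
}

# Inline policy for the role above. The reference to aws_iam_role.app.id
# creates an implicit dependency, so Terraform creates the role first
# without any explicit ordering in the configuration.
resource "aws_iam_role_policy" "app_logs" {
  name = "app-read-logs"
  role = aws_iam_role.app.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect   = "Allow"
      Action   = ["logs:DescribeLogGroups"]
      Resource = "*"
    }]
  })
}
```

Neither block says which resource comes first; the ordering follows from the reference alone. On destroy, the order is reversed and the policy is removed before the role.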

Finally, defining infrastructure with code means that engineering teams can take advantage of traditional software engineering toolchains for managing their deployment. Terraform code can be stored and managed in a VCS like Git. Infrastructure can be automatically tested and deployed using CI/CD pipelines. Terraform also enables modularity that makes critical initiatives like disaster recovery much easier.

For these reasons, software organizations that have started to adopt a DevOps culture should strongly consider Terraform for managing their application infrastructure. The next section lays out some important guidelines and key points for getting started.

Getting started with Terraform, technically

Purely from a technical perspective, the first thing anyone should do when learning a new tool or platform is to read the documentation! HashiCorp’s documentation portal should be a first and frequent stop for Terraform users. Basic Terraform usage and syntax are obviously important, but the primary goal in using Terraform for DevOps is tight integration with development workflows, deployment automation, and production application infrastructure.

Here are some key principles for adopting Terraform:

Start small

There will be a strong temptation to try and “Terraform all the things!” right away. Resist this urge. There is too much complexity to try and absorb and too much at stake with launching a new initiative. The ideal candidate for Terraform adoption is a smaller, decoupled part of the stack.

Don’t go it alone

Multiple engineers should be involved in the learning process. A shared understanding of Terraform’s best practices helps to prevent sprawl and minimize tech debt as usage grows. It also helps avoid the bus factor problem: if a single engineer is the only one able to manage, configure, and troubleshoot infrastructure deployments, there could be serious issues if that engineer isn’t available or, worse, leaves the company for another role. Software engineers who know how to configure and deploy their own infrastructure can also alleviate some of the DevOps team’s workload.

Version control and remote state

When first running Terraform, engineers will often configure and run it directly from their local workstation or development environment, with the state stored locally as a single file. This is ideal for learning but is ultimately a stop-gap that does not hold up at production scale. Terraform provides several features designed to allow safe, effective collaboration. Remote state enables multiple engineers to share and work from the same infrastructure state, and workspaces allow the same configuration to be used for multiple environments (like dev and prod).
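As a rough sketch of what remote state and workspaces can look like, the configuration below uses the S3 backend. The bucket name, key, and region are assumptions for illustration, and the bucket is assumed to exist already; other backends follow the same pattern.

```hcl
terraform {
  # Assumption: an S3 bucket named "example-terraform-state" already exists
  # and the engineers (or the CI system) running Terraform can access it.
  backend "s3" {
    bucket = "example-terraform-state"
    key    = "app/terraform.tfstate"
    region = "us-east-1"
  }
}

# terraform.workspace holds the name of the currently selected workspace
# (for example "dev" or "prod"), so one configuration can vary per environment.
locals {
  environment = terraform.workspace
  name_prefix = "app-${terraform.workspace}"
}
```

After `terraform init`, a workspace can be created with `terraform workspace new dev` and switched with `terraform workspace select prod`; each workspace keeps its own state.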

Automate

Another benefit of migrating away from local workstation runs is that it allows the use of CI/CD pipelines to automate the testing and deployment of more complex infrastructure deployments. Using a centralized CI/CD platform means everyone can have the same shared understanding of the state of ongoing infrastructure and application deployments. Like traditional CI/CD implementations, it also unlocks further automations like linting, documentation generation, and unit testing.

Modularize

Terraform has a feature called modules that allows users to encapsulate a variety of resources into a single unit. This is one of the most powerful features of Terraform and is no small part of why it fits so well into a DevOps initiative. With modules, teams can define complex, opinionated infrastructure once and reuse the same configuration over and over.

For example, a Terraform module could be created that would deploy a complete testing environment for a developer. Rather than having to manually create and destroy the infrastructure, they can simply call a pre-built Terraform module and provide a few configuration values, and they’ve got a fully-configured environment.
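Calling such a module might look like the sketch below. Everything here is hypothetical: the module path, its input variables (`owner`, `instance_type`, `enable_db`), and its `url` output would all be defined by whoever authors the module.

```hcl
# Hypothetical module call: the source path and every input below are
# assumptions for illustration, defined by the module's author.
module "test_environment" {
  source = "./modules/test-environment"

  owner         = "alice"
  instance_type = "t3.micro"
  enable_db     = true
}

# Expose a value the module is assumed to publish as an output.
output "test_environment_url" {
  value = module.test_environment.url
}
```

The developer only supplies a few values; the resources inside the module, and the opinions encoded in them, stay in one shared place.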

Terraform boosts your organization’s DevOps goals

DevOps is about continuous improvement and increasing software release velocity. With Terraform, software engineering teams have a shared, scalable definition of their infrastructure. Increased development and deployment velocity is a natural consequence of having modular, scalable infrastructure as code deployments. Software organizations that adopt Terraform gain a capable tool for building scalable, resilient application infrastructure.

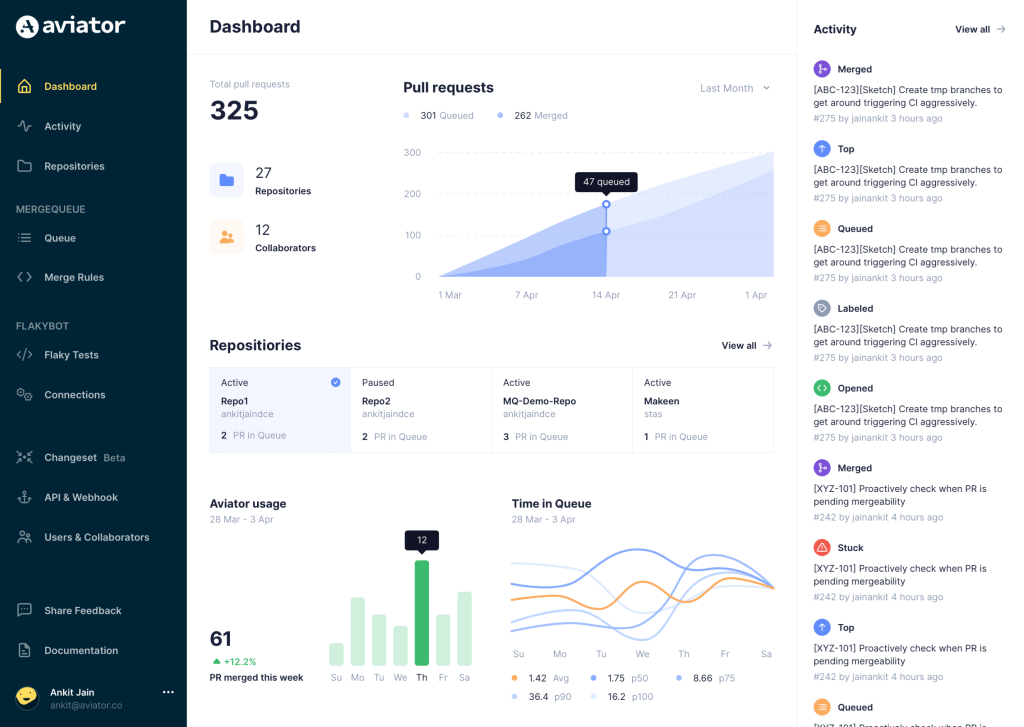

Aviator: Automate your cumbersome merge processes

Aviator automates tedious developer workflows by managing git Pull Requests (PRs) and continuous integration test (CI) runs to help your team avoid broken builds, streamline cumbersome merge processes, manage cross-PR dependencies, and handle flaky tests while maintaining their security compliance.

There are 4 key components to Aviator:

- MergeQueue – an automated queue that manages the merging workflow for your GitHub repository to help protect important branches from broken builds. The Aviator bot uses GitHub Labels to identify Pull Requests (PRs) that are ready to be merged, validates CI checks, processes semantic conflicts, and merges the PRs automatically.

- ChangeSets – workflows to synchronize validating and merging multiple PRs within the same repository or multiple repositories. Useful when your team often sees groups of related PRs that need to be merged together, or otherwise treated as a single broader unit of change.

- FlakyBot – a tool to automatically detect, take action on, and process results from flaky tests in your CI infrastructure.

- Stacked PRs CLI – a command line tool that helps developers manage cross-PR dependencies. This tool also automates syncing and merging of stacked PRs. Useful when your team wants to promote a culture of smaller, incremental PRs instead of large changes, or when your workflows involve keeping multiple, dependent PRs in sync.