Standardizing AI Coding Practices Across Your Engineering Org

Learn how AI coding standards prevent codebase fragmentation by turning individual knowledge into shared, reusable guidelines for both human and AI contributors.

Coding standards are the agreed-upon rules and guidelines that dictate how code is written. They typically apply to an organization, team, or codebase and cover coding patterns, naming conventions, and documentation.

These rules tell any contributor to a codebase how and where code should be added, which keeps the code consistent across numerous commits. Any developer should be able to pick up any part of the codebase and understand it quickly.

Coding Standards in AI Context

With the rise of AI agents and tools, coding standards are becoming increasingly important.

Nowadays, “any developer” doesn’t necessarily mean a human developer; it also includes AI participants. As a result, the definition of “coding standards” needs to expand into “AI coding standards” to accommodate this new type of contributor. Otherwise, AI tooling can introduce a whole new set of patterns and practices in the blink of an eye.

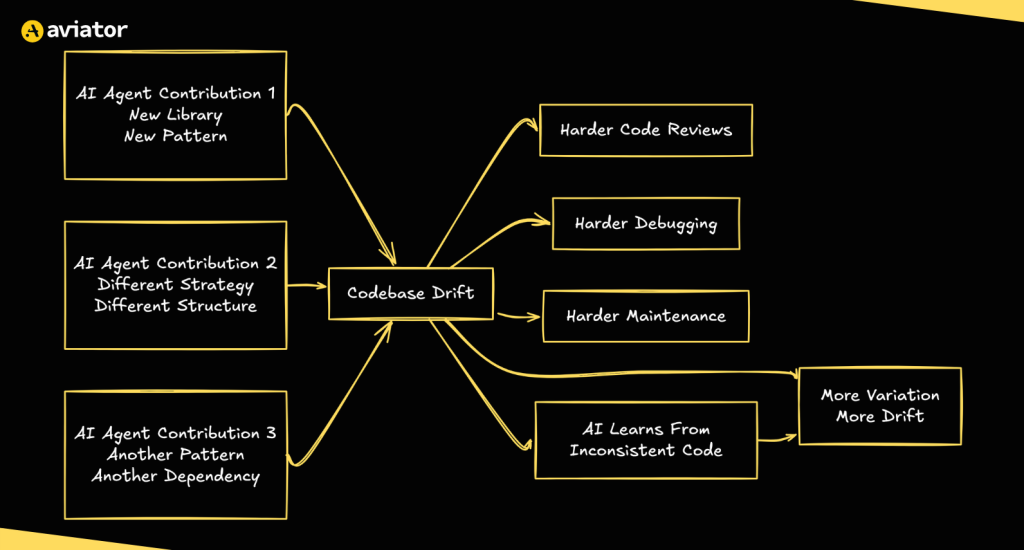

Troubles with Non-Standard Contribution

It’s not uncommon for two developers to produce different solutions to the same problem. With AI agents, given the way they currently work, you can expect wildly different solutions each time. They have no natural guardrails (or common sense, for that matter), and since they keep “learning” from existing code, including code that AI agents themselves contributed, the variation compounds over time. In every new instance, they may use different libraries, strategies, or patterns for structuring and adding code.

Codebase drift diagram

All this leads to contributions and a codebase that can be very hard to review, debug, and maintain.

Standards Are the Key to Success

The evolution of traditional code formatters, linters, and style guides has taught us that standardization creates the guardrails that allow contributors to focus on delivering value.

Of course, these guardrails are just as important for AI agents, if not more. When they are clearly stated, onboarding contributors and reviewing contributions becomes much easier. These guidelines extend beyond formatting and linting to also include more complex rules that dictate development processes.

Together, they form a central set of conventions based on the best practices already present in existing codebases. Among humans, these conventions often live implicitly, as company culture or habit. Once we write them down, they become explicit, which makes it possible to evaluate and iterate on them. They become a living part of the codebase that any contributor can use.

The Challenge of Collaboration in AI-Assisted Development

When AI first entered the workplace, it was in isolation. Developers experimented with prompts, coding assistants, and other tools to develop their own workflow.

However, all this accumulated knowledge of individual developers comes with a major drawback: it remains local. In fact, the flow that feels productive for an individual is only helpful for that particular individual.

At first glance, this may not seem like a big issue, since our coding standards maintain the status quo. Still, it does create inconsistencies that surface every time an individual contribution is added to the shared codebase.

From Individual Knowledge to Collective Context

Individual developers build their own mental model of the codebase over time. It contains the past decisions, legacy quirks, and other undocumented knowledge.

Our industry has consolidated individual decisions through automation: linters, formatters, and the checks we run in CI/CD pipelines. They preserve developer freedom while enforcing a single way of doing things.

The same logic applies to AI coding practices. Breaking them out of isolation into reusable models has created more predictable output and increased their shared value.

Here’s a quick comparison between individual and collective practices.

| Aspect | Individual Knowledge | Collective Context |
|---|---|---|
| Where it lives | In a single developer’s head or personal workflow | Codified in shared standards, linters, and CI/CD pipelines |
| Visibility | Local and siloed | Accessible to all contributors, including AI agents |
| Consistency | Varies per person | Enforces a single way of doing things |
| Scalability | Low; every person has their own mental model | Scales across teams, repos, and future contributions |
| AI compatibility | AI tools inherit individual quirks and gaps | AI agents follow explicit rules |
| Improvement | Personal effort | Feedback loops that benefit all |

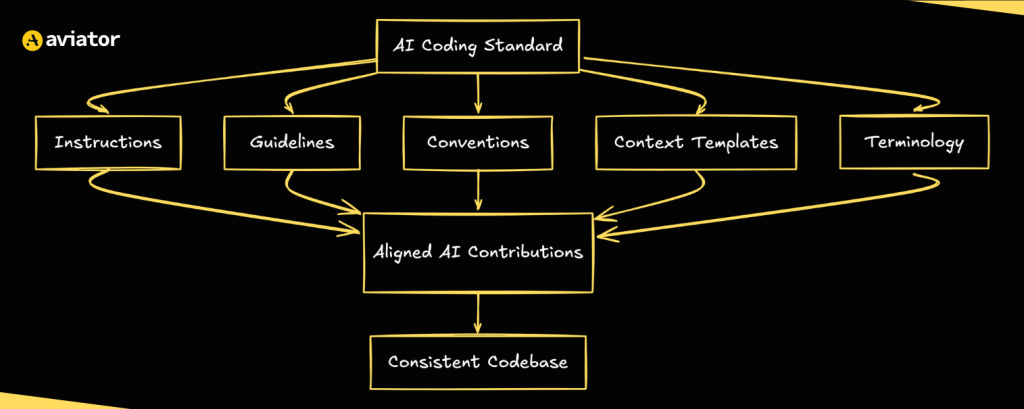

Components of an AI Coding Standard

The living document that makes up the coding standard is not a single file or instruction. It’s a collection of parts that define the standards together.

AI Coding Standard diagram

Each component has a specific goal within the context of standardization.

Instructions

They define how to behave in certain scenarios within a development process, for example:

- how to structure a commit;

- how to deal with dependencies;

- how to handle a breaking change.

Instructions are specific and actionable.
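To make an instruction such as “how to structure a commit” concrete, here is a minimal sketch of that instruction turned into a check that human and AI contributors can both run. It assumes the team has adopted a Conventional Commits-style header (`type(scope): summary`). The allowed types, the 72-character limit, and the rule that a breaking change (`!`) must also carry a `BREAKING CHANGE:` note are hypothetical team choices, not requirements of any particular tool.

```python
import re

# Hypothetical team instruction: commit headers follow a Conventional
# Commits-style "type(scope): summary" format. The allowed types, the
# 72-character limit, and the breaking-change rule are illustrative assumptions.
ALLOWED_TYPES = {"feat", "fix", "docs", "refactor", "test", "chore"}
MAX_HEADER_LENGTH = 72
HEADER_PATTERN = re.compile(
    r"^(?P<type>[a-z]+)(\((?P<scope>[\w-]+)\))?(?P<breaking>!)?: (?P<summary>.+)$"
)


def check_commit_message(message: str) -> list[str]:
    """Return a list of violations; an empty list means the message complies."""
    lines = message.splitlines()
    header = lines[0] if lines else ""
    match = HEADER_PATTERN.match(header)
    if match is None:
        return ["header must look like 'type(scope): summary'"]
    problems = []
    if match.group("type") not in ALLOWED_TYPES:
        problems.append(f"unknown type '{match.group('type')}'")
    if len(header) > MAX_HEADER_LENGTH:
        problems.append(f"header longer than {MAX_HEADER_LENGTH} characters")
    # Team rule (assumed): breaking changes marked with "!" must explain themselves.
    if match.group("breaking") and "BREAKING CHANGE:" not in message:
        problems.append("breaking change needs a 'BREAKING CHANGE:' note")
    return problems


print(check_commit_message("feat(auth): add token refresh"))  # []
print(check_commit_message("update stuff"))
```

Because the check returns every violation rather than stopping at the first one, an agent can fix all of them in a single pass.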

Guidelines

At a higher level than instructions, guidelines provide architectural or coding principles. They set general directions that cover situations specific instructions might not anticipate, which helps with edge cases.

They create space for an AI agent to provide clever solutions while aligning with the overall direction.

Coding Conventions

Coding conventions are the traditional tools we use to converge our code to a certain standard. They contain the way we name, format, and structure contributions. Typically, this is the domain of linters and formatters, but they are just as readable by AI agents.

Because these rules already live in the repository as configuration, AI agents can use them out of the box.

Context Templates

These contain specific information on a small scale, such as a module. For example, they describe the module’s purpose, as well as its dependencies, limits, and past decisions. The goal is to align local changes and make sure they fit within the global architecture.

Basically, this is the mental model of a developer put in writing.
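Here is a minimal sketch of what a context template could look like as structured data, which can then be rendered into text for a human reader or an AI agent. The `ContextTemplate` class, its field names, and the example `billing` module are hypothetical; teams often keep the same information in a Markdown file next to the module instead.

```python
from dataclasses import dataclass, field


@dataclass
class ContextTemplate:
    """Hypothetical module-level context record; field names are illustrative."""
    module: str
    purpose: str
    dependencies: list[str] = field(default_factory=list)
    limits: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the template as plain text that can be placed in an agent's context."""
        sections = [f"# Module: {self.module}", f"Purpose: {self.purpose}"]
        for title, items in (
            ("Dependencies", self.dependencies),
            ("Limits", self.limits),
            ("Past decisions", self.decisions),
        ):
            if items:  # skip empty sections to keep the context short
                sections.append(f"{title}:")
                sections.extend(f"- {item}" for item in items)
        return "\n".join(sections)


# Illustrative example module, not taken from a real codebase.
billing = ContextTemplate(
    module="billing",
    purpose="Creates invoices and talks to the payment provider.",
    dependencies=["orders", "payments-sdk"],
    decisions=["Amounts are stored as integer cents to avoid rounding errors."],
)
print(billing.render())
```

Recording past decisions explicitly matters most here, because these are the details an agent cannot infer from the code alone.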

Specific Terminology

Terminology is a language tool that allows us to think and communicate consistently.

Humans generally adapt to domain knowledge, jargon, and abbreviations over time. Written terminology definitions make that knowledge explicit for every contributor and connect the code to the outside world.
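One way to make terminology usable by an AI agent is to keep a glossary next to the code and surface only the entries relevant to a given task. The sketch below assumes a simple in-repo dictionary; the terms, their definitions, and the `relevant_terms` helper are hypothetical.

```python
import re

# Hypothetical project glossary; the terms and definitions are illustrative.
GLOSSARY = {
    "SKU": "Stock keeping unit: the identifier for a single sellable product variant.",
    "churn": "A customer cancelling their subscription within the billing period.",
    "PO": "Purchase order (not product owner) in this codebase.",
}


def relevant_terms(task: str, glossary: dict[str, str]) -> dict[str, str]:
    """Return glossary entries whose term appears as a whole word in the task text."""
    found = {}
    for term, definition in glossary.items():
        # \b keeps "PO" from matching inside a longer word such as "POST".
        if re.search(rf"\b{re.escape(term)}\b", task):
            found[term] = definition
    return found


task = "Add SKU validation to the PO import job"
print(sorted(relevant_terms(task, GLOSSARY)))  # ['PO', 'SKU']
```

Matching is case-sensitive on purpose, so abbreviations like “PO” are not confused with ordinary words.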

Quality Requirements

Before we contribute our code, we make sure that it meets the quality standards we’ve established, such as passing test cases, meeting coverage thresholds, and adhering to accessibility guidelines, to name a few. Since these requirements are easy to measure, including them in the contribution workflow doesn’t require too much effort.

An AI agent can also rely on these requirements to determine when a task is completed.
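As a minimal sketch of quality requirements used as a completion check, assume the test runner, coverage tool, and accessibility checker have already produced numbers for a contribution. The `QualityReport` structure and the 80% coverage threshold are hypothetical; use the thresholds your own standards define.

```python
from dataclasses import dataclass


@dataclass
class QualityReport:
    """Measured results for one contribution; how they are collected is out of scope here."""
    tests_failed: int
    coverage_percent: float
    accessibility_violations: int


# Hypothetical team threshold; pick a value that matches your own standards.
MIN_COVERAGE_PERCENT = 80.0


def unmet_requirements(report: QualityReport) -> list[str]:
    """List every requirement the contribution still misses."""
    unmet = []
    if report.tests_failed > 0:
        unmet.append(f"{report.tests_failed} failing test(s)")
    if report.coverage_percent < MIN_COVERAGE_PERCENT:
        unmet.append(
            f"coverage {report.coverage_percent:.1f}% is below {MIN_COVERAGE_PERCENT:.0f}%"
        )
    if report.accessibility_violations > 0:
        unmet.append(f"{report.accessibility_violations} accessibility violation(s)")
    return unmet


def is_task_complete(report: QualityReport) -> bool:
    """An agent can treat the task as done only when nothing is unmet."""
    return not unmet_requirements(report)


print(unmet_requirements(QualityReport(tests_failed=0, coverage_percent=72.5, accessibility_violations=0)))
# ['coverage 72.5% is below 80%']
```

Returning the list of unmet requirements, not just a boolean, gives the agent a concrete to-do list for its next iteration.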

Feedback Loop

As mentioned earlier, AI coding standards are a living document, so they should evolve alongside the code. As a codebase grows, new patterns emerge, edge cases appear, and conventions and standards may shift.

Every contribution, whether automated or manually added, creates an opportunity to reflect on and refine the state of the standards. This can include removing outdated guides, adding new directions, or expanding the codebase with additional pieces of context or terminology over time.

Together, these components form the system that contributors can rely on when working with the codebase. By making previously implicit agreements, contexts, and principles explicit, these standards enable ongoing review and improvement.

Let’s quickly summarize them.

| Component | Purpose | Scope |
|---|---|---|
| Instructions | Define how to behave in specific development scenarios | Commit structure, dependency management, breaking changes |
| Guidelines | Provide higher-level architectural and coding principles | General direction, edge cases |
| Coding Conventions | Converge code to a consistent format and structure | Naming, formatting, structuring |
| Context Templates | Describe module-level details like purpose, dependencies, and past decisions | Local changes aligned with global architecture |
| Specific Terminology | Ensure consistent language across the team and codebase | Domain knowledge, jargon, abbreviations |
| Quality Requirements | Set measurable thresholds a contribution must meet | Tests, coverage, accessibility guidelines |
| Feedback Loop | Evolve standards alongside the codebase over time | Removing outdated guides, adding new context or conventions |

Learn Once, Apply Everywhere

Without standardization, delivering at scale carries a compounding risk of fragmented, inconsistent patterns at every turn. Standardization flips that risk on its head: the more consistent your standards, the more consistent the output.

Every improvement to the guidelines also benefits everyone who uses them, including all future contributions.

When to Use It

When applying AI coding standards, you may expect that a greenfield project is the best foundation, but it’s quite the opposite! Existing projects with established patterns and practices offer an excellent starting point, simply because there’s more context to build upon.

Any brownfield codebase already contains the core parts of standardization, such as formatting and linting rules, documentation, tests, and established code patterns. The main challenge is to extract this knowledge and formalize it into clear, actionable standards. Existing projects have another advantage, too: a collaborative mindset, in which code reviews and sharing best practices are already part of the culture.

Even small-scale projects or collaborations immediately benefit from AI coding standards. Consistency pays off over time, and with clear guidelines, even a sole developer can command a virtual team of AI agents!

Example

I’ve got a repository of a project that hasn’t been touched in over 4 years. It’s a perfect example of some legacy code, low on documentation, that hasn’t been upgraded because it works (but there’s little knowledge on how exactly it works).

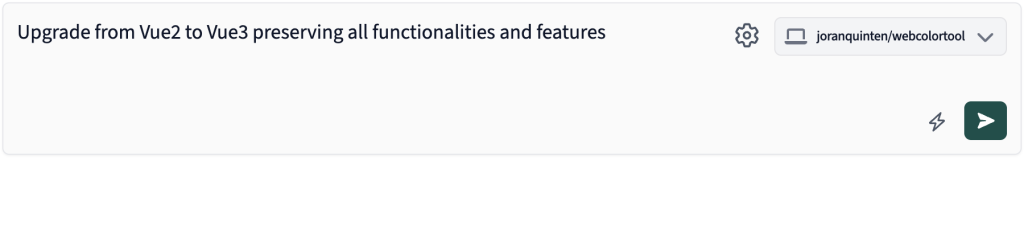

Instead of manually going through the codebase, I start with a simple instruction in the Runbooks interface:

Instruction in the Runbooks interface

That’s all I’m going for. We can refine the command later on, but for now, I just want to show how it can take vague instructions and turn them into concrete actions.

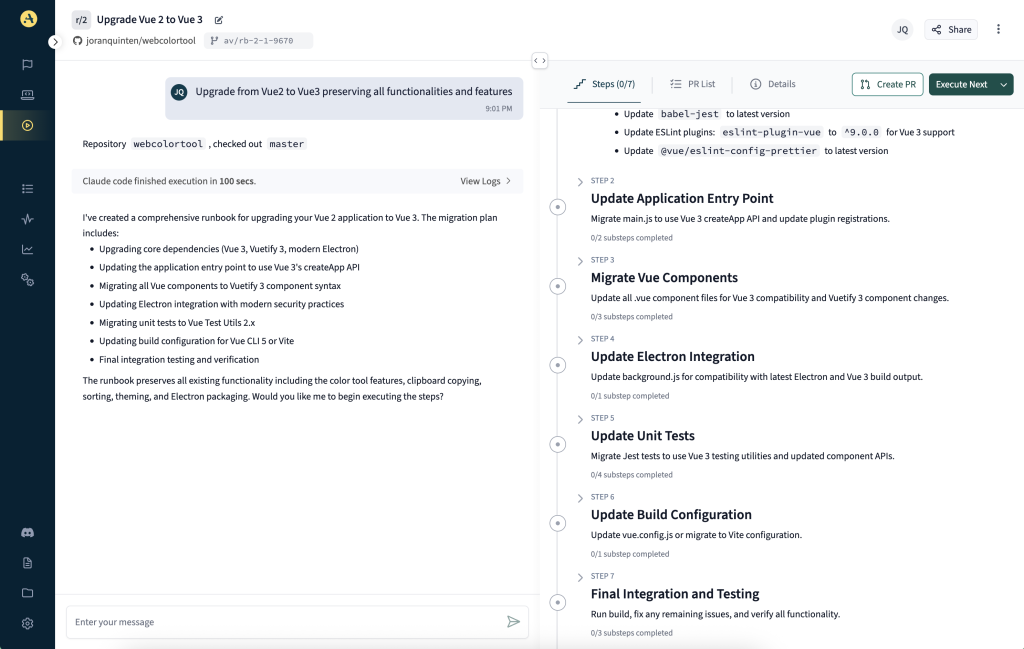

After Aviator spends a few moments ingesting the codebase, it generates a Runbook with seven steps toward the goal, each involving several major (breaking) changes:

Runbook interface

Out-of-the-Gate Standardization

What’s interesting about this generated Runbook is that it standardizes the steps right out of the box, clearly distinguishing what to do, in what order, and according to which guidelines and conventions.

It prioritizes core packages before supportive ones, removes outdated packages from the project, and sometimes replaces them with a contemporary counterpart, favoring current standards over legacy decisions.

Resolving Issues

The project builds as an Electron app and also deploys to Netlify. Because the environment was outdated and there was no documentation, Aviator wasn’t aware of any Netlify-specific requirements. This exposed a big gap in domain knowledge, which I resolved by interacting with the agent.

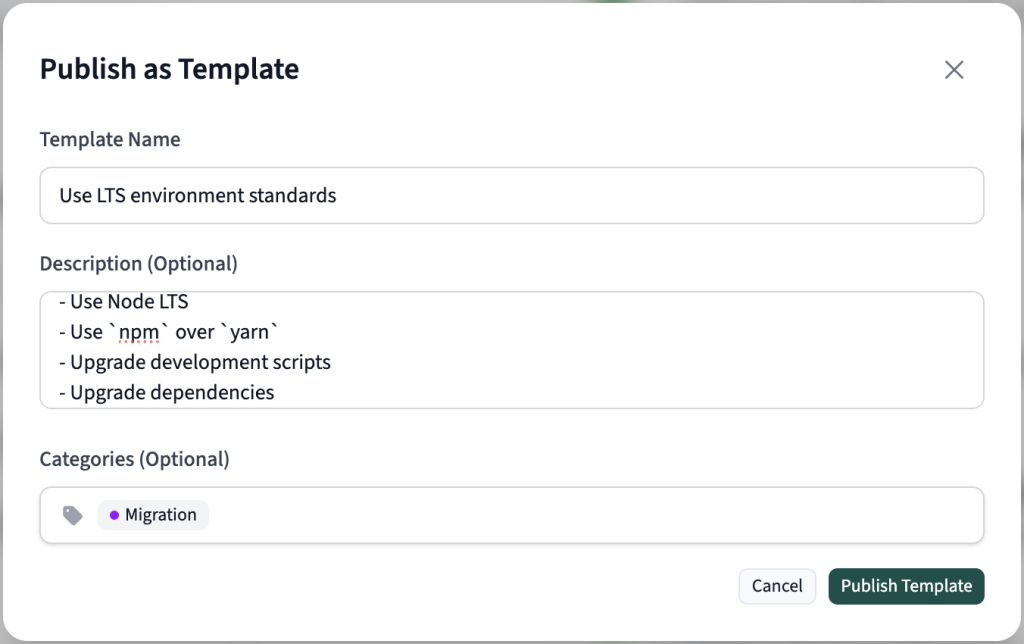

The main issue for Netlify was the outdated Node environment, an excellent example of how requirements can shift on your development platform. I added the requirement to use Node LTS.

As you can see, standardization is already happening in the process. It’s just a matter of interacting with the agent and feeding it new requirements to adopt. This is very much how real-world scenarios would play out: discovering requirements and implementing them for the future.

Standardization

The real power comes from being able to share and reuse proven Runbooks. Aviator makes it easy to turn this (or any other) Runbook into templates that can be applied across other repositories:

Create a template for a Runbook

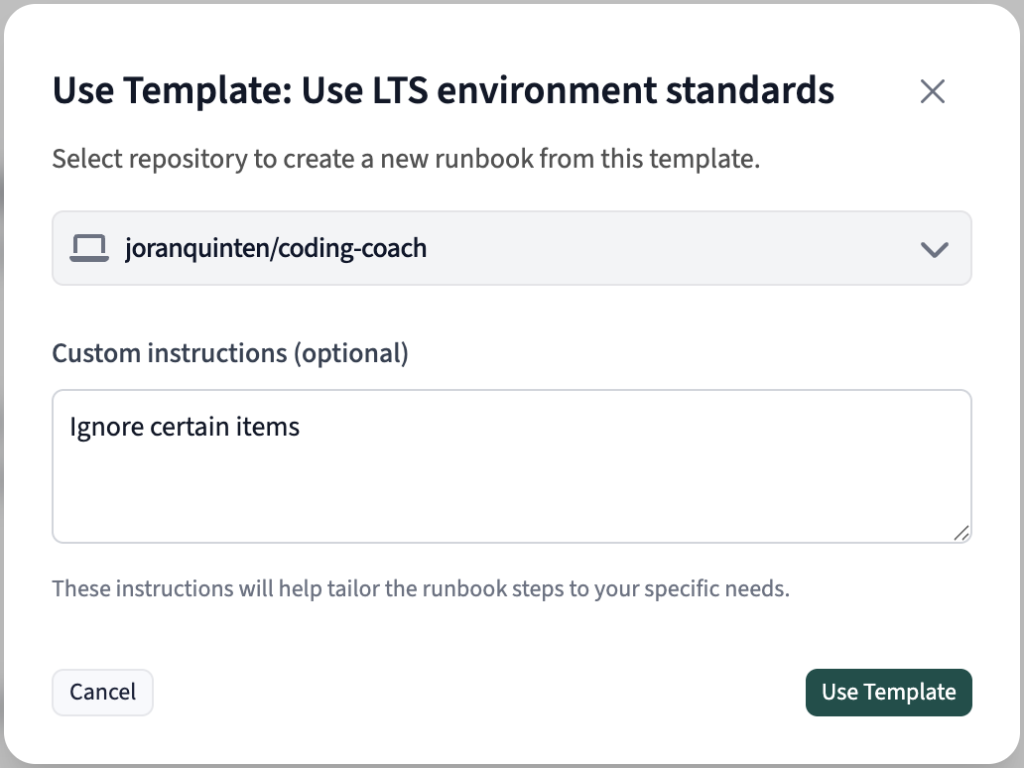

Reuse

We can reuse the existing standards across other repositories in the organization. There’s also the added flexibility of manually skipping or adjusting specific items in the template.

Adjusting the Runbook template
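The skip-and-adjust idea can be modeled generically as a shared list of steps applied per repository. The following is only an illustrative model and not Aviator’s API. The `Step` type, the step keys, the `plan_for_repo` helper, and the override text are all hypothetical, and the step descriptions loosely paraphrase the migration above.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Step:
    """One step of a reusable migration template (illustrative model, not Aviator's API)."""
    key: str
    description: str


# Hypothetical template distilled from the first migration; step keys are made up.
UPGRADE_TEMPLATE = (
    Step("node-lts", "Pin the Node.js version to the current LTS release"),
    Step("core-deps", "Upgrade core packages before supporting ones"),
    Step("remove-legacy", "Remove or replace outdated packages"),
    Step("netlify", "Verify the Netlify build settings"),
)


def plan_for_repo(template, skip=frozenset(), overrides=None):
    """Apply the shared template to one repository, skipping or adjusting steps."""
    overrides = overrides or {}
    plan = []
    for step in template:
        if step.key in skip:
            continue  # e.g. a repository that never deploys to Netlify
        if step.key in overrides:
            # replace() returns a new Step, so the shared template stays untouched.
            step = replace(step, description=overrides[step.key])
        plan.append(step)
    return plan


plan = plan_for_repo(
    UPGRADE_TEMPLATE,
    skip={"netlify"},
    overrides={"core-deps": "Upgrade the framework packages first, then the rest"},
)
for step in plan:
    print(f"{step.key}: {step.description}")
```

Keeping the template immutable and producing a per-repository plan means local adjustments never leak back into the shared standard unless someone deliberately updates it.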

The migration decisions and standards I defined on one local project become shared guidelines that both human and AI contributors can use and build on.

Who Owns the Standards?

Ownership of non-functional requirements is something we’ve been working on, as well.

Typically, senior developers, tech leads, or platform teams take ownership of the standards. The same applies to AI coding standards: someone has to be responsible for guiding the principles.

These standard maintainers are in charge of resolving conflicts when they arise. They should constantly look for improvements and, ideally, ways of measuring impact and success. That feedback goes directly into the next round of improvements.

Escape Hatch

No matter how well designed a standard is, it can’t anticipate every new situation. There will be times when you want to bypass or temporarily ignore the guidelines. A proven strategy is to annotate where a bypass was applied and why.

This marks the code as “non-standard,” making it clear that it shouldn’t be adopted as a recommended pattern and that it might be fixed in the future.
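Annotations like these are easy to audit. Here is a minimal sketch that assumes a hypothetical comment convention, `standards-bypass: <reason>`. It lists every bypass with its location and flags the ones that were left without a reason.

```python
import re

# Hypothetical marker convention: "standards-bypass: <reason>" in a comment.
BYPASS_PATTERN = re.compile(r"standards-bypass:\s*(?P<reason>.*)")


def find_bypasses(source: str, filename: str) -> list[tuple[str, int, str]]:
    """Return (filename, line number, reason) for every annotated bypass."""
    found = []
    for number, line in enumerate(source.splitlines(), start=1):
        match = BYPASS_PATTERN.search(line)
        if match:
            found.append((filename, number, match.group("reason").strip()))
    return found


def missing_reasons(bypasses: list[tuple[str, int, str]]) -> list[tuple[str, int]]:
    """Bypasses without a reason defeat the purpose of the annotation."""
    return [(name, number) for name, number, reason in bypasses if not reason]


# Illustrative source text; it is only scanned, never executed.
example = '''\
import legacy_http  # standards-bypass: vendor SDK still requires it, see migration plan
data = load()
# standards-bypass:
raw_sql = "SELECT * FROM orders"
'''
bypasses = find_bypasses(example, "orders.py")
print(bypasses)
print(missing_reasons(bypasses))  # [('orders.py', 3)]
```

Running a check like this in CI keeps the list of known exceptions visible, so it doubles as input for the feedback loop described earlier.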

How to Adapt

As you have seen, AI coding standards are not a wide departure from our existing mode of operation. They are a natural extension of the way we’ve always worked, because they give us an opportunity to examine our unspoken rules and culture and capture them in writing.

AI coding standards help remove friction from core processes and let people focus on the decisions that really need human creativity and judgment. The goal is to build value, and the standards and guidelines outline how to get there.

Frequently Asked Questions

How do AI coding standards prevent codebase fragmentation?

AI agents learn from existing code and operate on patterns, so they can introduce wildly different libraries, patterns, or structures. At scale, these differences compound. Standards serve as guardrails that steer agent behavior and prevent this drift.

Can solo developers benefit from AI coding standards?

Yes! Even though imposing rules on your own playground sounds cumbersome, the truth is, standards allow you to orchestrate multiple AI agents efficiently, achieve durable consistency, and ensure quality in the long run.

What if an AI agent or developer in the process needs to deviate from standards?

That’s common, since no process is perfect. What matters is that the deviation is documented, so it’s clear it isn’t a pattern to follow. If a bypass is truly necessary, add a comment at its location explaining the reason. And yes, this step should be part of the standards, too.