FlexReview – A flexible code review framework

Code reviews have evolved significantly over the years, and in 2024 they’re more crucial than ever. Engineering teams work remotely across different time zones, and large pieces of code are written by AI. Yet code reviews remain one of the most common areas of frustration for large distributed teams.

First Principles

Contrary to popular belief, code reviews are not an efficient mechanism for detecting bugs. Research from Microsoft and IEEE shows that only 15% of review comments hint at a possible defect. Manual and automated testing are better at finding real defects.

However, code reviews excel at:

- Knowledge Sharing: A code review narrates the thought process behind implementing a feature and shares your ideas. Code reviews help new team members get familiar with existing patterns, and reduce bus-factor risk.

- Code Quality: Reviews spread better practices for building high-quality software. The author learns better practices from the reviewer, and the reviewer can learn them too by reviewing high-quality code.

- Consistency: Following similar patterns across a large organization can help increase efficiency, as the code becomes a lot easier to follow, uses familiar patterns, and is well documented.

Codeowners today

The CODEOWNERS feature allows you to specify individuals or teams who are responsible for certain code paths in a repository. This way, they are automatically assigned as reviewers for any code change that modifies the respective code path. This concept doesn’t scale well for large teams. Here’s why:

- Whether it’s a change to an import statement or a 1,000-line feature, the rules are the same

- A small number of reviewers can get overwhelmed with most of the reviews

- There’s no notion of domain experience; all reviewers are treated alike

- The CODEOWNERS file can be hard to maintain and becomes brittle over time

- Many times reviewers only want to track changes rather than take the time to properly review the PRs

- There’s no way to set more complex requirements; for example, you cannot require a review from both the product team and the security team.

Code review assignment itself is also messy:

- It’s hard to know whom to assign as reviewers from other teams

- It won’t consider out-of-office schedules / time zones of the reviewer / reviewee.

- It won’t allow assigning primary / secondary reviewers for training purposes.

- It won’t consider the review load of the reviewer. Some people already have too many PRs to review.

The key challenge with the Codeowners feature is that it tries to define ownership, reviewers, and gatekeeping all in one file.

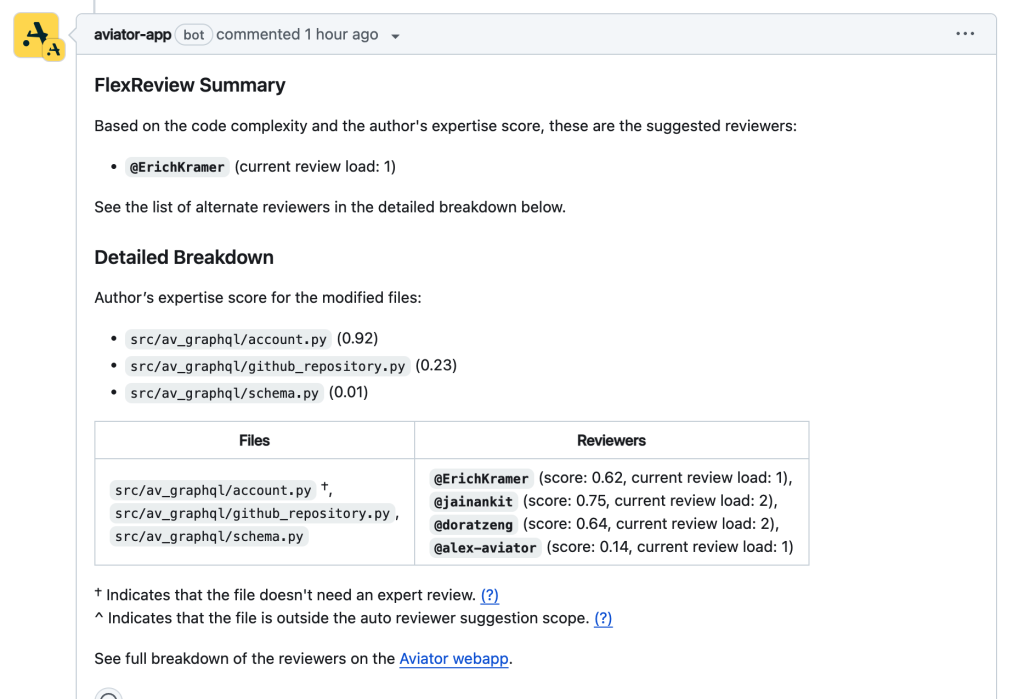

Introducing FlexReview

FlexReview introduces flexibility in your code review process by understanding that every code change is different, and every reviewer is different. Instead of defining fine-grained static codeowners, it analyzes past data of code review patterns to calculate an expert score for every file and every developer. It uses this score along with reviewer availability, workloads and the complexity of code change to determine the right reviewers.

There are four core capabilities:

- Dynamic reviewer selection for each code change

- Validation of the approval requirements for merging the change

- Track changes that require your attention as a reviewer or an author

- Track first review response times (SLOs) and set up automated actions

Domain expertise score

FlexReview calculates a “domain expertise score” for each package / file path. Think of domain expertise as a dynamic alternative to defining code ownership: these are the people who understand those pieces of code well and can provide meaningful feedback.

The domain expertise score is calculated based on:

- past code changes reviewed by the developer

- past code changes authored by the developer

- frequency of change for that code path

- existing static CODEOWNERS config file (if present)

These scores are then normalized, and based on these normalized scores developers are qualified to be code reviewers for specific code paths.
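The article does not say how these signals are weighted or combined, so the following is only a minimal sketch of one way the calculation could look. The weights, the threshold, and the function names `expertise_scores` and `qualified_reviewers` are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical weights; the article does not say how the signals are combined.
WEIGHTS = {"reviewed": 1.0, "authored": 1.5}
CODEOWNER_BONUS = 0.5


def expertise_scores(history, codeowners, change_counts):
    """Return {path: {developer: normalized score in (0, 1]}}.

    history: iterable of (developer, path, kind), kind is "reviewed" or "authored".
    codeowners: {path: set of developers listed in a static CODEOWNERS file}.
    change_counts: {path: number of past changes touching that path}.
    """
    raw = defaultdict(lambda: defaultdict(float))
    for dev, path, kind in history:
        # Dividing by change frequency turns raw counts into a share of activity.
        raw[path][dev] += WEIGHTS[kind] / max(change_counts.get(path, 1), 1)
    for path, owners in codeowners.items():
        for dev in owners:
            raw[path][dev] += CODEOWNER_BONUS
    # Normalize per path so the strongest developer on each path scores 1.0.
    normalized = {}
    for path, devs in raw.items():
        top = max(devs.values())
        normalized[path] = {dev: score / top for dev, score in devs.items()}
    return normalized


def qualified_reviewers(scores, path, threshold=0.5):
    """Developers whose normalized score for a path meets the threshold."""
    return sorted(d for d, s in scores.get(path, {}).items() if s >= threshold)
```

Normalizing per path rather than globally keeps a rarely touched directory from being drowned out by a busy one, which is one plausible reading of "normalized" here.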

Code complexity

Similar to domain expertise, FlexReview also calculates the code complexity of a PR to suggest reviewers. Understanding code complexity is itself a complex problem, so FlexReview takes a few factors into account:

- the number of lines of code

- whether the file has import changes or modified comments

- simple refactors

- modifying README and other non-code files

Complexity is calculated per file, because a large change can still contain several low-complexity files that may not need an expert reviewer.
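As a rough illustration of per-file complexity, the sketch below classifies each file from its changed lines. The suffix list, the regular expression, the 50-line cutoff, and the three levels are assumptions; detecting simple refactors is left out because it needs more than line-level inspection.

```python
import re

NON_CODE_SUFFIXES = (".md", ".txt", ".rst")  # assumed list of non-code files
# Matches blank lines, "#" or "//" comments, and Python-style import lines.
TRIVIAL_LINE = re.compile(r"^\s*(#|//|import\s|from\s+\S+\s+import\s|$)")


def file_complexity(path, changed_lines):
    """Classify one file in a change as "low", "medium" or "high"."""
    if path.lower().endswith(NON_CODE_SUFFIXES):
        return "low"
    # Ignore comment-only, import-only and blank lines.
    meaningful = [line for line in changed_lines if not TRIVIAL_LINE.match(line)]
    if not meaningful:
        return "low"
    if len(meaningful) <= 50:  # hypothetical cutoff
        return "medium"
    return "high"


def change_complexity(files):
    """Map each path in {path: changed_lines} to its complexity level."""
    return {path: file_complexity(path, lines) for path, lines in files.items()}
```

A 2,000-line PR that mostly updates a README and a handful of imports would come out as mostly "low" files here, with only the files carrying real logic asking for an expert.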

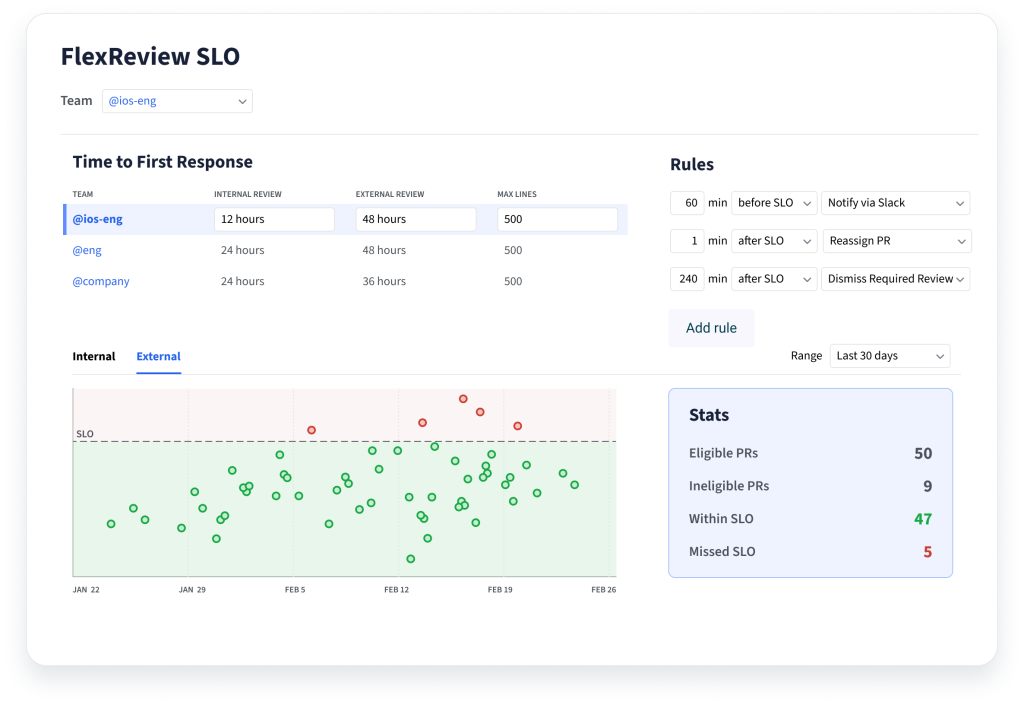

Code review SLO

Review SLO (Service Level Objective) is the suggested time it should take the reviewer to respond to a PR. Note that this is NOT the time it takes for a PR to be approved, but rather just getting the first response back. Studies have proven that reducing iteration time for developers has a huge impact on developer productivity.

Think of SLO as an agreement between the author and the reviewer for the response on the code review.

However, to make sense of SLO data, there are a few things to consider:

- Not all code is the same: reviewing a 10-line change and a 1,000-line change cannot be done on the same timescale

- Teams work in different time zones or keep different business hours

- Internal team reviews are generally faster than external reviews.

With those in mind, FlexReview SLO lets you define response times per code size limit. For example, a code change with fewer than 400 lines should be reviewed within 1 business day. It tracks time zones and business days to get a clear picture. This incentivizes authors to create PRs that fall within the SLO agreement (smaller changes), which also makes the code easier to review and improves response times.
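To make the size-based SLO concrete, here is a small sketch that turns a PR's size into a first-response deadline counted in business days. Only the "fewer than 400 lines, 1 business day" tier comes from the text; the second tier, the function names, and the weekend-only definition of a non-business day are assumptions.

```python
from datetime import datetime, timedelta, timezone

# (upper bound on changed lines, business days allowed).
# Only the first tier comes from the text; the second is an assumption.
SLO_TIERS = [(400, 1), (1000, 2)]


def slo_business_days(changed_lines):
    """Business days allowed for a first response, or None if no tier applies."""
    for max_lines, days in SLO_TIERS:
        if changed_lines < max_lines:
            return days
    return None


def first_response_due(opened_at, changed_lines, reviewer_tz):
    """Deadline for the first response, expressed in the reviewer's time zone.

    opened_at must be timezone-aware; reviewer_tz is any tzinfo object.
    """
    days = slo_business_days(changed_lines)
    if days is None:
        return None
    due = opened_at.astimezone(reviewer_tz)
    while days > 0:
        due += timedelta(days=1)
        if due.weekday() < 5:  # Monday is 0, Friday is 4
            days -= 1
    return due


# A PR opened on Friday afternoon (reviewer's local time) is due on Monday.
reviewer_tz = timezone(timedelta(hours=-8))  # fixed offset, for illustration only
opened = datetime(2024, 3, 1, 23, 0, tzinfo=timezone.utc)
due = first_response_due(opened, 120, reviewer_tz)
```

Counting in the reviewer's time zone, rather than the author's, is a design choice in this sketch: it keeps a PR opened late on a Friday from burning its whole SLO over the reviewer's weekend.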

How it all comes together

All these factors are used to calculate the final reviewer suggestions and then validate the approval requirements. Low complexity files or experienced authors may not need expert reviewers, while high complexity files or inexperienced authors do. That, combined with reviewer availability and load, helps identify suggested reviewer candidates.

Reviewer assignment

FlexReview defines a few goals while calculating reviewer suggestions:

- Reduce the total number of reviewers needed for each code review. Having fewer reviewers helps reduce iteration time

- Knowledge sharing – expand the suggested pool of reviewers wherever applicable

- Ensure the code owners requirement is satisfied, if it is enforced
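The first goal, keeping the reviewer count small, resembles a set-cover problem. The sketch below uses a greedy heuristic that also accounts for load and availability; it is an assumption about how such a selection could work, not FlexReview's documented algorithm, and it leaves out the knowledge-sharing and code-owner goals. All names are hypothetical.

```python
def suggest_reviewers(files_needing_expert, experts_by_file, open_reviews, unavailable=()):
    """Greedily pick few reviewers who together cover every file needing an expert.

    files_needing_expert: set of paths (for example, the high-complexity files).
    experts_by_file: {path: set of qualified developers}.
    open_reviews: {developer: number of PRs already awaiting their review}.
    unavailable: developers who are out of office.
    Returns (chosen reviewers in pick order, sorted paths nobody could cover).
    """
    uncovered = set(files_needing_expert)
    chosen = []
    while uncovered:
        coverage = {}
        for path in uncovered:
            for dev in experts_by_file.get(path, ()):
                if dev not in unavailable:
                    coverage.setdefault(dev, set()).add(path)
        if not coverage:
            break  # nobody is available for the remaining files
        # Prefer the widest coverage, then the lightest load, then name for stability.
        best = min(coverage, key=lambda d: (-len(coverage[d]), open_reviews.get(d, 0), d))
        chosen.append(best)
        uncovered -= coverage[best]
    return chosen, sorted(uncovered)
```

Greedy selection does not always find the smallest possible set, but it is simple to reason about and tends to get close in practice.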

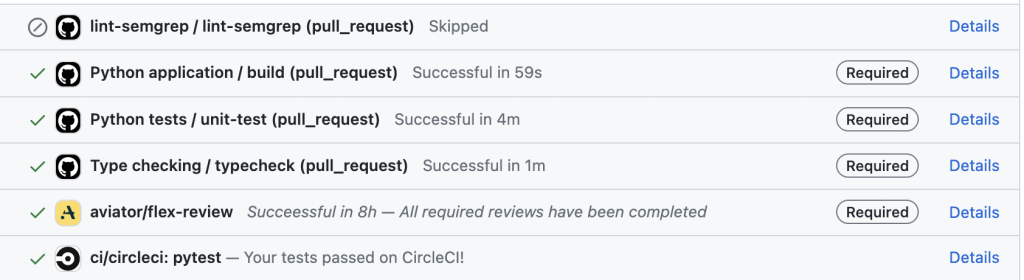

Validation

For validation, it provides a GitHub status check. So instead of using GitHub’s CODEOWNERS review requirement, users can gate merges on this required status check.

The validation uses the same concepts as the reviewer assignment to calculate a list of valid reviewers. There are three possible values for this status check:

- Success: All required reviewers have completed the review, and the PR is ready to merge.

- Pending: Waiting on review from some required reviewers. Once those reviewers review the PR, the status will automatically change to success.

- Failure: If the current list of assigned reviewers of the PR does not meet the approval requirements, the status check reports a failure. Changing or adding reviewers triggers a recalculation of this logic.
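The three states can be expressed as a small decision function. The sketch below assumes each approval requirement is modeled as a group of developers, any one of whom can satisfy it; that modeling and the name `review_status` are assumptions for illustration.

```python
def review_status(required, assigned, approved):
    """Return "success", "pending" or "failure" for a PR.

    required: list of sets; each set holds the developers who can satisfy one requirement.
    assigned: set of developers currently assigned as reviewers.
    approved: set of developers who have approved.
    """
    # Success: every requirement has at least one approval.
    if all(group & approved for group in required):
        return "success"
    # Failure: some unmet requirement has no assigned reviewer who could satisfy it.
    for group in required:
        if not group & approved and not group & assigned:
            return "failure"
    # Otherwise we are waiting on reviewers who are already assigned.
    return "pending"
```

For example, with `required = [{"ana", "bo"}, {"sec"}]`, assigning only `ana` reports a failure because nobody assigned can satisfy the second requirement; adding `sec` as a reviewer moves the check to pending.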

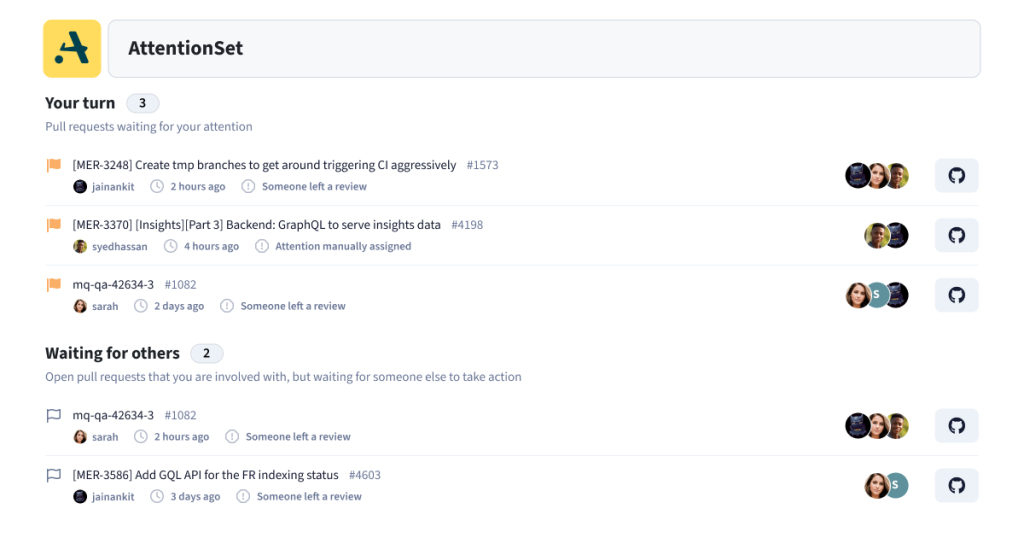

AttentionSet

Inspired by Gerrit, AttentionSet keeps teams informed about PRs needing attention. Every time there is an action by a user or a system, AttentionSet tracks that activity and checks if the author or a reviewer may need to be notified. But instead of notifying immediately, it adds the PR to the list of PRs needing attention. By having a singular place for all PRs needing attention, it minimizes interruptions during the flow state, while still making sure that developers can easily track changes needing their actions.

AttentionSet follows rules very similar to those described by Gerrit, and it states the reason for each attention assignment. The following reasons may be assigned for getting attention:

- A code review has been assigned to you

- A reviewer commented on the PR authored by you

- A reviewer approved a PR authored by you

- Someone manually assigned the attention to you

- A required status check is failing on the PR authored by you

Likewise, there may be PRs associated with you that are waiting on actions from others:

- A PR is waiting for the reviewer’s comments

- A PR you reviewed is waiting on the author to take an action

- You manually removed attention from this PR

- The PR is in queue waiting to be merged
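A few of these rules can be sketched as a small event handler that moves attention between the author and reviewers. The event names, the class shape, and the choice to clear a reviewer's attention once they respond are assumptions; the reason strings are taken from the list above.

```python
from collections import defaultdict

REASONS = {
    "review_requested": "A code review has been assigned to you",
    "commented": "A reviewer commented on the PR authored by you",
    "approved": "A reviewer approved a PR authored by you",
    "check_failed": "A required status check is failing on the PR authored by you",
}


class AttentionSet:
    """Tracks which PRs need whose attention, with a reason for each."""

    def __init__(self):
        self._items = defaultdict(dict)  # user -> {pr: reason}

    def add(self, user, pr, reason):
        self._items[user][pr] = reason

    def remove(self, user, pr):
        self._items[user].pop(pr, None)

    def for_user(self, user):
        """Return a copy of the PRs currently needing this user's attention."""
        return dict(self._items[user])

    def on_event(self, event, pr, author, actor, reviewers=()):
        """Apply one event; event names here are hypothetical."""
        if event == "review_requested":
            for reviewer in reviewers:
                self.add(reviewer, pr, REASONS[event])
        elif event in ("commented", "approved") and actor != author:
            self.add(author, pr, REASONS[event])
            self.remove(actor, pr)  # the reviewer is now waiting on the author
        elif event == "check_failed":
            self.add(author, pr, REASONS[event])
```

Because events only update the list instead of sending a notification, a developer can check `for_user` at a moment of their choosing rather than being interrupted.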

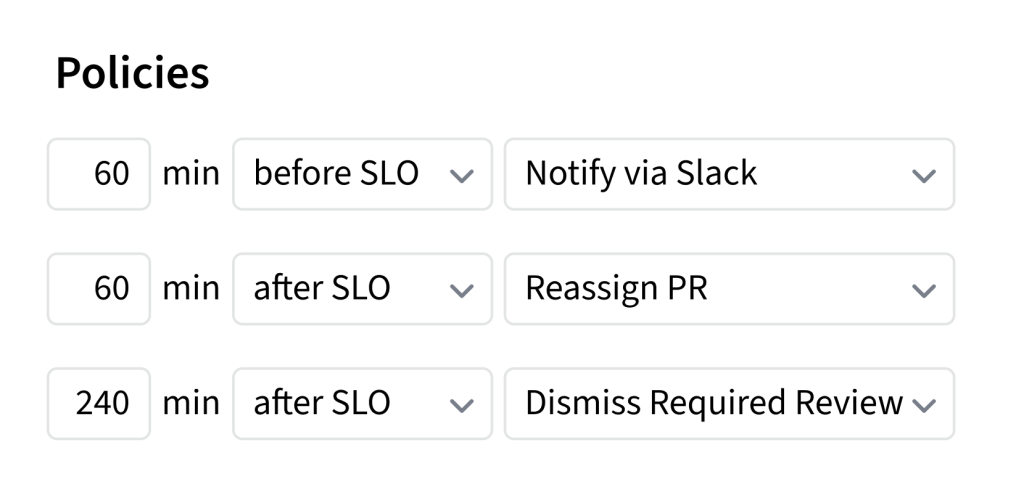

SLO automated rules

After understanding the SLOs, it’s easy to imagine how they tie in with AttentionSet. Developers can now track the response times of their PRs, or of the ones requiring their attention, and act on them. Every team can also track how it is performing on the review SLOs, and analyze the P50, P90, and P99 times to first review response on a PR.

Additionally, teams can configure automated actions based on SLOs. For instance, you can send a Slack notification to the reviewer when a PR is about to miss its SLO, or relax the review requirement so that anybody can review the PR instead of the required reviewer. These automated rules can help introduce better practices for code reviews over time, and improve the overall response times.
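Both ideas are simple to express in code. The sketch below computes nearest-rank percentiles over first-response times and picks an automated action for a PR that is still waiting. The 80% warning point, the choice to relax the requirement only once the SLO is missed, and the action names are assumptions.

```python
def percentile(values, p):
    """Nearest-rank percentile of a non-empty list; p is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = (p * len(ordered) + 99) // 100  # integer ceiling of p * n / 100
    return ordered[max(rank, 1) - 1]


def slo_action(hours_elapsed, slo_hours, warn_fraction=0.8):
    """Pick an automated action for a PR still waiting on its first response."""
    if hours_elapsed >= slo_hours:
        return "relax_requirement"  # let any reviewer respond
    if hours_elapsed >= warn_fraction * slo_hours:
        return "notify_reviewer"  # for example, a Slack reminder
    return "none"


# First-response times in hours for ten recent PRs (made-up sample data).
response_hours = [1, 2, 3, 4, 5, 6, 7, 8, 9, 40]
summary = {p: percentile(response_hours, p) for p in (50, 90, 99)}
```

Looking at P90 and P99 alongside P50 matters because a single PR that waited 40 hours barely moves the median but is exactly the kind of outlier an SLO is meant to surface.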

Getting started

Moving away from the strict controls of the Codeowners concept is a big cultural change. To ease the transition and familiarize your team with FlexReview, you can start with one specific directory in your repository. By selecting a single directory, you can test and evaluate the functionality of FlexReview in a controlled environment before expanding its usage to other parts of your repository. This approach allows you to gradually adapt to the new review process while minimizing any potential disruptions to your existing workflows.